Teaching AI Literacy

Best practices in teaching the basics of AI to your workforce.

How many AI intro courses are there out there these days? It seems like everyone and their brother has one, right?

Thousands. There are thousands of them.

There are so many AI intro courses right now that even the agents I sent scouring the web are having a hard time quantifying them. Between universities, online learning platforms, consulting firms, tech companies, YouTube creators, and corporate L&D programs, the number is huge, and it’s growing monthly.

But are any of these courses any good, and should you invest in one to get started with AI?

Well…eh. No. Maybe. Depends on your needs. You don’t need a special expensive course to learn about AI when there are tons of resources that can teach you about the science of AI. However, when we’re talking about the practice of AI, then it helps to have a guide.

Most AI courses fall into one of the following buckets:

Technical foundations: math, modeling, coding—if we go back to our “AI is a car” analogy, this teaches you mechanical engineering and internal combustion

Business/executive overviews: a very short intro that reads about like my “The Basics of AI” article last month (and yet still convinces executives they’re experts)

Hands-on tool use: usually generative AI focused, teaching people how to create good prompts and do useful things with the results

Ethics and governance: the rules of the road, meaning how we create traffic laws for these AI cars we’re now all driving, and who should write them

So…how do I know what the good courses are?

A good intro course should:

Focus on concepts more than tools

Explain probabilistic thinking

Clarify limitations, roles, and functions

Distinguish AI from automation

Provide practical ways to integrate AI and human judgment

What I recommend, if you want to learn AI or want your workforce to learn AI, is this: take a concept course, then use AI daily in your routine for 30 days (I have a prompt in my last article that will help you figure out how to do that). Then create or join a community of practice to develop use cases, test solutions, study governance and decision rights, and grow together.

That combination will outpace most certification courses. Yes, you’ll still want some technical experts around in an advisory capacity, but not everyone in your organization needs to be a technical expert. Which means…there’s a lot of this you and your L&D teams can teach yourselves.

Let’s decode it. 🚀

Focusing on the practice of AI more than the science of AI.

The single fastest way to fail at AI literacy is to center on the tool. AI literacy is less about tool training and more about concepts. There’s a lot of foundational information people need to understand to use AI effectively that has nothing to do with the tool.

Instead, if we’re looking for useful practical applications, we need to start more along the lines of these questions:

“What decision are we trying to improve?”

“What question are we trying to answer?”

“What data informs that question?”

“Where could AI assist?”

When we implemented Army Data Literacy, we didn’t start with the data. We started with the question or questions we were trying to answer, and then explored the kinds of data available and how to analyze them, always with the aim of supporting that question.

So let’s tweak that architecture for the design of an AI literacy course:

What decision must be made?

What question must be answered to make that decision?

What information do we need?

What data produces that information?

How do we interpret it responsibly?

What action follows?

I know, I know. You’re going to tell me that I don’t use AI anywhere in that architecture. That’s because we don’t want to have AI just for the sake of having AI—we want to figure out where AI can intelligently accelerate pieces of that chain.

And most of that acceleration starts happening at Step 3—bringing together and consolidating the information we need to answer our question and make our decision.

And that’s the problem: it’s tempting to jump straight to Step 3.

If you skip the first two steps—decision and question—you end up with outputs that might have nothing to do with your outcomes.
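One way to make that chain concrete is to write it down as a simple record and check that the first two steps are filled in before anyone reaches for a tool. This is only a minimal sketch: the class name, field names, and the example values are my own illustrations, not part of any standard framework.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class DecisionChain:
    """The six questions from the architecture above, in order."""
    decision: str = ""        # 1. What decision must be made?
    question: str = ""        # 2. What question must be answered to make it?
    information: str = ""     # 3. What information do we need?
    data: str = ""            # 4. What data produces that information?
    interpretation: str = ""  # 5. How do we interpret it responsibly?
    action: str = ""          # 6. What action follows?


def first_missing_step(chain: DecisionChain) -> Optional[str]:
    """Return the name of the first unanswered step, or None if all six are filled in."""
    for f in fields(chain):
        if not getattr(chain, f.name).strip():
            return f.name
    return None


def ready_for_ai(chain: DecisionChain) -> bool:
    """Steps 1 and 2 must be answered before AI starts helping at step 3."""
    return bool(chain.decision.strip() and chain.question.strip())


# Hypothetical example: someone jumped straight to gathering information.
skipped = DecisionChain(information="Attrition numbers by unit")
print(ready_for_ai(skipped))        # False
print(first_missing_step(skipped))  # decision
```

The check is deliberately dumb. It can’t tell you whether the decision or the question is a good one, only whether somebody bothered to write it down first.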

That leads me to our first best practice.

Best Practice #1: Teach people to start with the question.

AI is really good at helping you answer well-formed questions, when it understands the role, the parameters, and what constitutes a good answer in the context of the question. And it can spiral pretty far out there when answering poorly formed ones.

In AI literacy training, spend some serious time:

Mapping the decision context

Framing the problem precisely

Identifying assumptions

Defining constraints

Before anyone touches a tool, ask:

Who is the decision-maker?

What risk is attached?

What would a wrong answer cost?

What does “good” look like?

This minimizes trouble later, and it helps keep the tool you’re using from spinning off in a probabilistic direction beyond constraints that maybe you forgot to tell it about. Oops.

Best Practice #2: Teach probabilistic thinking.

Everyone loves probabilities, don’t they?

I didn’t, not after working with probabilistic design for most of my grad school and thesis experience. Which I think is why my program director made me teach stochastic modeling back when I was an AP at West Point. The side effect was that I got to learn a lot about probability.

To understand how AI works, you have to think in terms of probabilities, not deterministic equations.

What do I mean?

Well, there’s no gonkulator in AI where y = mx + b. Or if there is, m, x, and b are all variables, each ranging over its own set of possible values (m1 to mz, x1 to xz, and so on), and that range may or may not follow some kind of organized distribution.

AI systems don’t provide certain answers. They run the probabilities until they get something that checks out mathematically as being close enough to your desired answer.

Think about how Pandora or Spotify recommend songs you might like, or how Amazon tells you that based on your previous purchases, you absolutely need to add this one thing to…why is that in my cart already? You get the idea. The recommendation engines that power those functions rely on the same kind of probabilistic math you find in generative AI tools, and you train them by interacting with them: a thumbs up or down on Pandora or Spotify, feedback in follow-up prompts to GenAI.

If you want a single input going into a single workflow to a single function, that’s not AI—that’s automation.

If you want it to generate options and adjust those options based on newly introduced data (or interactions), then you need AI.

So ditch the idea that y = ax^2 + bx + c. Instead, understand that you have some inputs that go into a function with a range of possible modifiers, and that function gets run a whole heck of a lot of times until the machine decides the answer is statistically close enough to what you’re looking for to release an outcome.

There is no “right.” There’s just “close enough.”
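If it helps to see the difference, here is a toy sketch of the same line run both ways. To be clear about the assumptions: the ranges and distributions for m and b are made up for illustration, and this is not how a generative model is actually built. It only shows the shift from one fixed answer to a spread of answers.

```python
import random
import statistics
from typing import Tuple


def deterministic(m: float, x: float, b: float) -> float:
    """Classic y = mx + b: same inputs, same answer, every time."""
    return m * x + b


def probabilistic(x: float, runs: int = 10_000, seed: int = 42) -> Tuple[float, float]:
    """Treat m and b as ranges instead of fixed numbers and run the line many times.

    Returns the mean and standard deviation of the resulting y values.
    """
    rng = random.Random(seed)  # fixed seed so the demo is repeatable
    ys = []
    for _ in range(runs):
        m = rng.uniform(1.5, 2.5)  # assumed: slope anywhere between 1.5 and 2.5
        b = rng.gauss(1.0, 0.5)    # assumed: intercept centered on 1.0
        ys.append(m * x + b)
    return statistics.mean(ys), statistics.stdev(ys)


print(deterministic(2.0, 3.0, 1.0))  # 7.0
mean, spread = probabilistic(3.0)
print(f"mean={mean:.2f}, spread={spread:.2f}")  # should land near 7, give or take about 1
```

The deterministic version hands you one number. The probabilistic version hands you a center and a spread, and somebody still has to decide whether that is close enough.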

And if you’re a glutton for punishment, you can read more about probabilistic design in this article I wrote for International Transactions on Operations Research after I finished my thesis.

Best Practice #3: Start with data foundations.

Even if you have a probabilistic engine doing its best to produce an answer statistically close enough to what you want, it’s going to have a really hard time doing that if you feed it the wrong information.

Bottom line—if your data is poor, your AI won’t work.

There’s a reason we harp on research methods classes in higher education. There’s a reason we collect certain data in certain ways, and you should understand what data you have, how it was collected and for what purpose, and how to gauge the quality of that data before you start feeding it into your AI tools.

You don’t have to create a stable of data engineers for this. Just include elements of data literacy training so that everyone understands the basics of data and how it’s used before they start trying to put it into AI.
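For a sense of what “the basics” can look like in practice, here is a small sketch of two checks almost anyone can learn to run before handing data to a tool: how much of a column is missing, and how many rows are exact repeats. The function names and the sample records are hypothetical, and real data quality work goes well beyond this.

```python
from typing import Any, Dict, List


def profile_column(rows: List[Dict[str, Any]], column: str) -> Dict[str, int]:
    """Count total, missing, and distinct values for one column."""
    values = [row.get(column) for row in rows]
    present = [v for v in values if v not in (None, "")]
    return {
        "rows": len(values),
        "missing": len(values) - len(present),
        "distinct": len(set(present)),
    }


def count_duplicate_rows(rows: List[Dict[str, Any]]) -> int:
    """Count rows that exactly repeat an earlier row."""
    seen = set()
    duplicates = 0
    for row in rows:
        key = tuple(sorted(row.items()))  # order-independent fingerprint of the row
        if key in seen:
            duplicates += 1
        else:
            seen.add(key)
    return duplicates


# Made-up records for illustration only.
records = [
    {"employee_id": "E1", "skill": "Python"},
    {"employee_id": "E2", "skill": ""},
    {"employee_id": "E3", "skill": None},
    {"employee_id": "E1", "skill": "Python"},
]
print(profile_column(records, "skill"))  # {'rows': 4, 'missing': 2, 'distinct': 1}
print(count_duplicate_rows(records))     # 1
```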

If you want some good resources for data literacy, I highly recommend my friend Jordan Morrow’s book, Be Data Literate, and Arizona State University’s Study Hall Data Literacy video series on YouTube. These are great tools to get started and can help you get your vocab built before you dive into other courses and platforms.

Hmmm…maybe I need to get the gang together to write “How to teach data literacy.”

Best Practice #4: Teach judgment and evaluation.

Prompting is the easiest part of interacting with AI to teach. Judgment and the evaluation of solutions are much harder.

So you’ve got to include topics like:

When to trust outputs (and how you know)

When to escalate

When to override

When not to use AI at all

This is best done through practical exercises, where you can put students through their paces on things like:

Identifying hallucinations and “lie” responses (did it give you a link that goes to something totally unrelated to the question?)

Spotting subtle bias

Comparing AI-generated output to human-generated output

Stress-testing recommendations

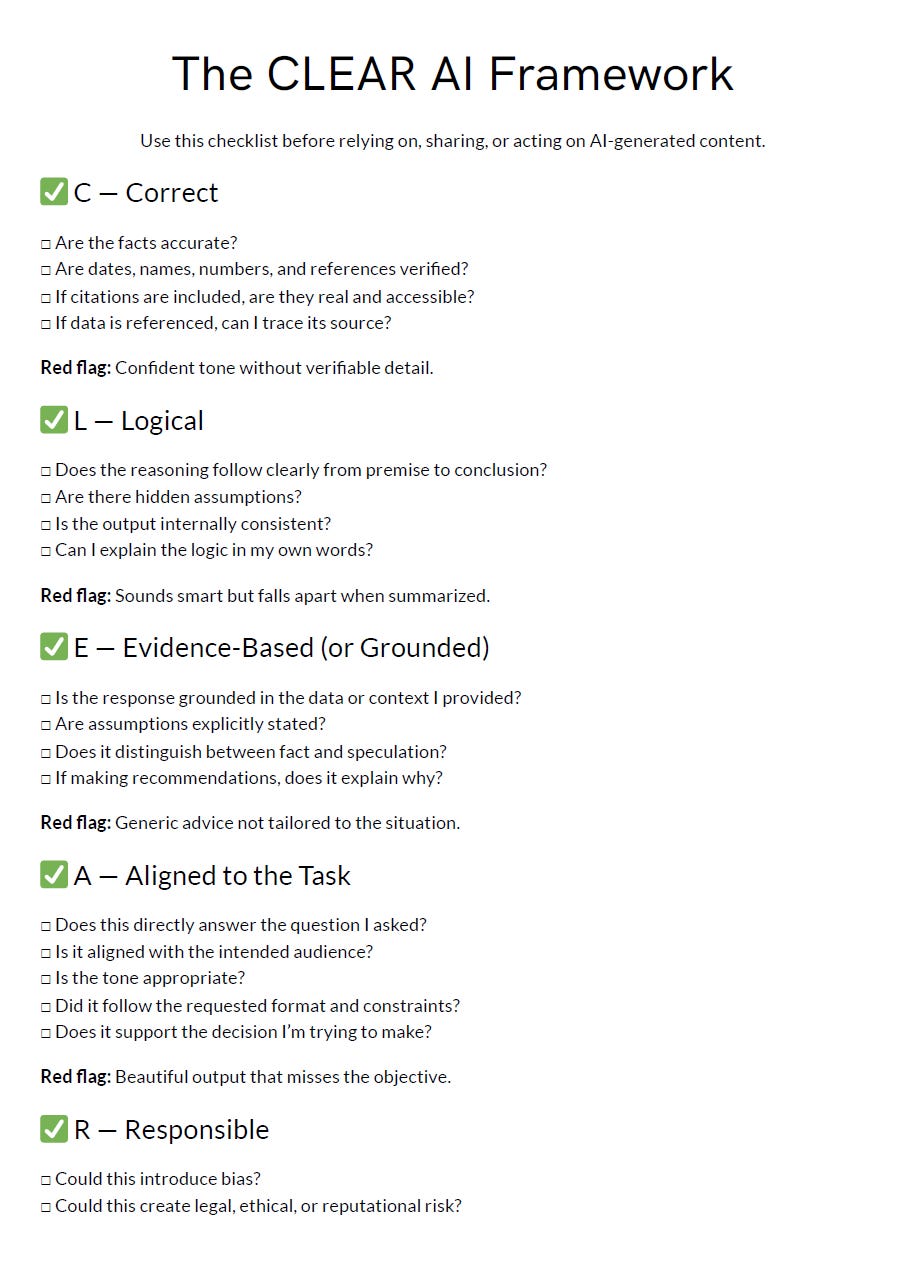

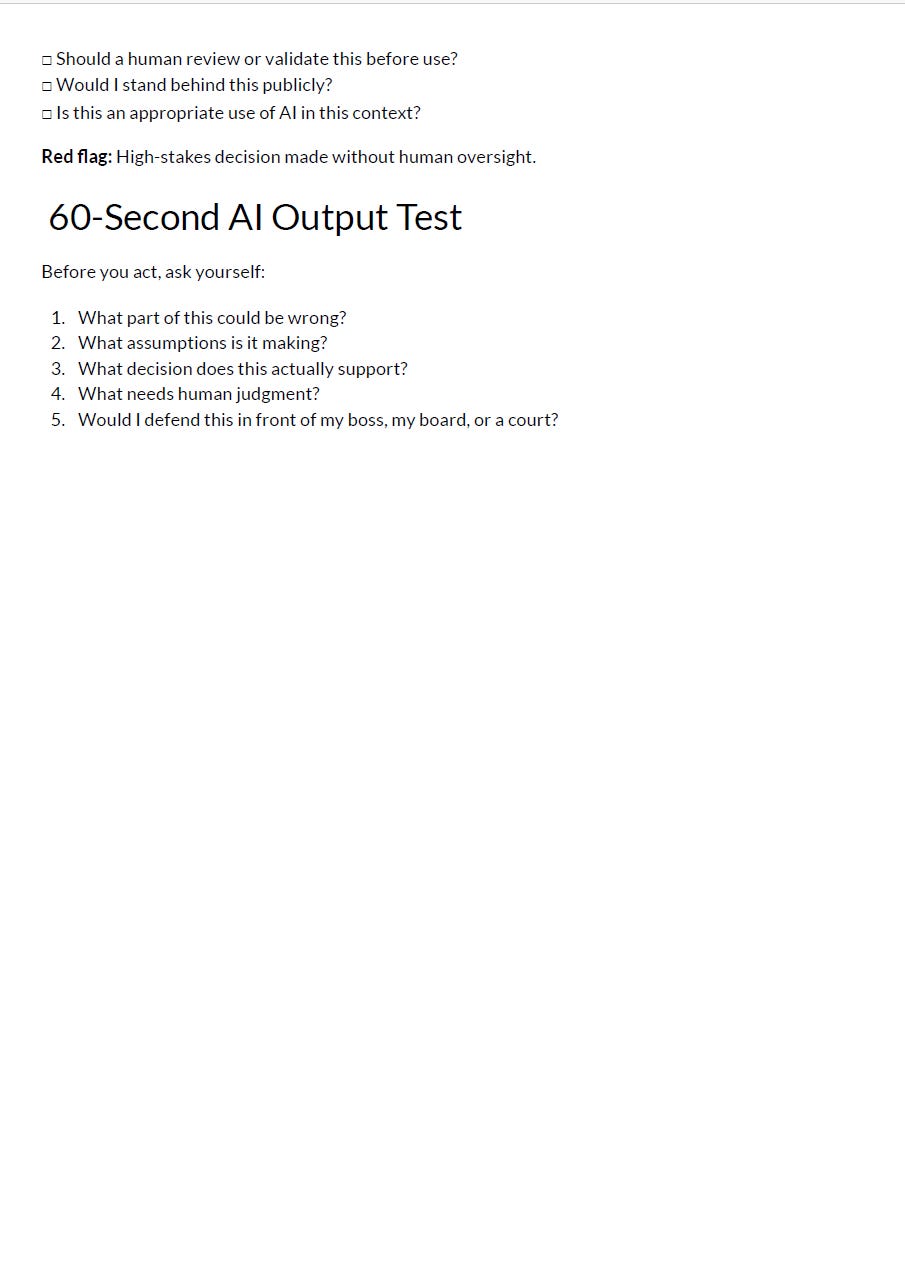

Show lots of examples of responses, and have students evaluate them. I like to use what we call the CLEAR test. Is it:

C - correct

L - logical

E - evidence-based

A - aligned to the task

R - responsible

Check the bottom of the article for a printable form that helps you break that down.
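If your team would rather track CLEAR reviews in a script or spreadsheet export than on paper, the five checks above map onto a very small record. This is a sketch under my own naming assumptions: the class and field names are illustrative, and the yes/no judgments still come from a human reviewer, not from the code.

```python
from dataclasses import dataclass
from typing import List

# The five CLEAR checks, in order.
CLEAR_CRITERIA = ("correct", "logical", "evidence_based", "aligned", "responsible")


@dataclass
class ClearReview:
    """One reviewer's yes/no judgment of a single AI response."""
    correct: bool
    logical: bool
    evidence_based: bool
    aligned: bool       # aligned to the task
    responsible: bool

    def failed(self) -> List[str]:
        """List the checks the response did not meet."""
        return [name for name in CLEAR_CRITERIA if not getattr(self, name)]

    def passes(self) -> bool:
        """A response passes only if it meets all five checks."""
        return not self.failed()


# Hypothetical review: the answer reads well but cites nothing.
review = ClearReview(correct=True, logical=True, evidence_based=False,
                     aligned=True, responsible=True)
print(review.passes())  # False
print(review.failed())  # ['evidence_based']
```

Treating any single failed check as a fail is a design choice for this sketch. Some teams may prefer to weight the checks differently depending on the risk attached to the decision.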

Best Practice #5: Make it role specific.

Generic AI literacy fails because it doesn’t really help you bridge from the academic to the practical application of AI. To be practical, you have to bring in domain expertise and put the person in their role.

Here are some examples of what I mean:

A recruiter should practice:

Resume summarization

Job description refinement

Candidate comparison logic

A workforce planner should practice:

Scenario modeling prompts

Skill adjacency mapping

Attrition risk framing

A policy professional should practice:

Comparative language analysis

Compliance extraction

Executive briefing summarization

Literacy sticks when it touches real work.

Best Practice #6: Normalize experimentation.

AI literacy isn’t a one-and-done certificate. If anything, it’s the start of building good scientific and practical habits around your tools.

Encourage curiosity, weekly experimentation, and internal sharing of experiments and use cases. Discuss successes and failures openly. Share prompt libraries across teams; you can divide these into threads for work prompts, home efficiency, travel, just for fun, you name it.

The organizations that advance fastest treat AI use as a learning culture, not a one-and-done certificate.

Your training will be successful if…

Your workforce becomes:

Clear thinkers

Better question framers

Data-aware professionals

Responsible decision-makers

Confident designers of human–AI systems

Just like the car example, you don’t just need one kind of expertise. For cars, you need engineers to build them but also mechanics to keep them running, roadside service to help you with repairs in a pinch, folks who write the traffic laws and police the roads from harmful behaviors, and, more than anything, educated responsible drivers.

AI is the same. Unless you’re running an AI company, your AI literacy program shouldn’t be about making auto engineers or digging into the details of internal combustion engines, but about making those educated responsible drivers.

Drivers need a working knowledge of how to operate their tools to get from Point A to Point B faster and more efficiently than they would on foot or in a horse and buggy. But unless they know where Point A and Point B are, it’s driving just for driving’s sake (which is fun, but probably not what you’re looking for).

So if you want to teach AI literacy well…

Start with the decision

Go to the question

Talk about the data

Then, only then, introduce the tool.

AI is powerful, but the real leverage comes from having a workforce that knows what to ask, and what to do with the answer.

(Scroll to the bottom for that printable!)

Here’s that printable checklist for the CLEAR framework I mentioned earlier! These are screenshots from my PDF, which I’ll try to figure out how to hang on my page!